The Ocean Health Index Conduct Phase

- 1 Introduction

- 1.1 Where you are in the OHI+ process

- 1.2 Outcomes of conducting an assessment

- 1.3 Best practices for OHI assessments

- 1.3.1 Incorporate core values and characteristics into the OHI assessment framework before gathering information

- 1.3.2 Maintain core values and characteristics within the assessment framework regardless of limited information quality

- 1.3.3 Strategically define spatial boundaries balance information availability and decision-making scales

- 1.3.4 Carefully document and share all decisions in writing and computational code

- 1.4 The OHI Toolbox

- 2 Requesting your repositories

- 3 Starter Repository

- 3.1 Discovering and gathering input information

- 3.1.1 Thinking creatively

- 3.1.2 Data sources

- 3.1.3 Gathering responsibilities

- 3.1.4 Requirements for data and indicators

- 3.1.5 Relevance to ocean health

- 3.1.6 Accessibility

- 3.1.7 Quality

- 3.1.8 Reference point

- 3.1.9 Appropriate spatial scale

- 3.1.10 Appropriate temporal scale

- 3.1.11 The process of information discovery

- 3.2 Formatting Data for the Toolbox

- 3.3 Defining spatial boundaries

- 3.4 Developing Goal Models, Reference Points, and Pressures and Resilience

- 3.1 Discovering and gathering input information

- 4 Full Repository

- 5 Saving and Registering Data Layers

- 6 Modifying goal models

- 7 Modifying Pressures and Resilience

- 8 Removing goals and sub-goals

- 9 Calculate overall OHI Index Scores

- 10 Toolbox Troubleshooting

- 11 Appendix 1: Toolbox Software

- 12 Appendix 2: Goal descriptions

- 13 Appendix 3: R Tutorials for OHI

- 14 Appendix 4: Frequently Asked Questions (FAQs)

Welcome to the Conduct Phase of your OHI+ assessment! This manual contains essential information on how to complete your own OHI+ assessment. It should be used by the technical team that will gather and organize data, prepare data, and develop goal models to then calculate OHI scores. Transparency, collaboration, and communication are important throughout the entire process, and the OHI Toolbox workflow facilitates these important principles.

The first sections will provide conceptual and technical guidance for all participants. It contains information on OHI philosophy, what to expect when conducting an OHI+ assessment, best practices, and an introduction to the OHI Toolbox. Transparency and repeatability is critical during the data gathering, organization, and preparation stage, not only to successfully complete and communicate the current assessment, but to enable repeated assessments through time. More details on Goal Model Development and Pressure and Resilience, How to report data layers and model descriptions, and Frequently Asked Questions are included in the Appendixes. The remaining sections of the manual provide step-by-step instructions on how to use the toolbox and troubleshoot, and will be most helpful to the toolbox master.

This manual should be used in conjuction with our other web materials, including Four Phases of OHI+, Presentations, and our community Forum.

Citation: Ocean Health Index. 2016. Ocean Health Index Assessment Manual. National Center for Ecological Analysis and Synthesis, University of California, Santa Barbara. Available at: ohi-science.org/manual. Download PDF version

NOTE: If you are conducting an OHI+ assessment and/or have downloaded the OHI repository prior to 2016, please cite: Ocean Health Index. 2015. Ocean Health Index Toolbox Manual [date]. National Center for Ecological Analysis and Synthesis, University of California, Santa Barbara. Available at: https://github.com/OHI-Science/ohi-science.github.io/raw/master/assets/downloads/other/ohi-manual-2015.pdf

1 Introduction

1.1 Where you are in the OHI+ process

The OHI+ process consists of four phases. In the first phase, you learned about the OHI to understand the philosophy behind the goals and the motivation for conducting an assessment. In the second phase, you actively planned to conduct your OHI+ assessment. Now you will actively conduct the assessment by engaging with the work of finding the data, preparing the goal models, and taking the necessary steps to learn how to use the OHI Toolbox and related software to produce final scores. This is where the science of data discovery and goal model development comes in, with important emphasis on transparency, collaboration, and communication. In the final phase, you will communicate the findings and results of your assessment with others.

The OHI framework allows you to synthesize the information and priorities relevant to your local context and produce comparable scores. Because the methods of the framework are repeatable, transparent, quantitative, and goal-driven, the process of a carrying out an OHI+ assessment is as valuable as the final results.

The first completed assessment for a study area is valuable because it establishes a baseline and highlights the state of information quality and availability in an area. Any subsequent assessments carried out through time are also valuable because they can be used to track and monitor changes in ocean health. Your assessment will require careful thought and consideration along the way, and the OHI Toolbox and workflow facilitates collaboration and transparency. Transparency throughout the OHI workflow will help track the decisions made during assessment calculations and will enable repeatability for future assessments.

Each OHI+ assessment should have a clear purpose. One of the typical reasons for conducting an independent assessment is to inform policy and management decisions. Assessments can be more relevant to management when they are conducted at the spatial scales at which policy decisions are made, such as states, provinces, or counties. Regions and study area are terms that will be used throughout the assessment. The study area is the entire spatial boundary of your assessment, while the regions are the smaller subdivisions within the study area. In the OHI framework, goal scores are calculated for regions separately and then combined to produce an overall OHI score for each study area. The number of regions varies with each assessment’s study area; completed assessments have had between one and 220 regions.

The process of conducting an OHI+ assessment is as valuable as the final results. Documenting decisions made, as well as the challenges and successes encountered along the way, can lead to better understanding of the system, help inform management decisions, and guide future assessments to track changes through time.

When conducting an OHI+ assessment, it is important to include information that best represents your study area, and to make science-driven decisions and clearly document what was done and why. Your team should as creative and insightful as you can be while working within the bounds of informational and technical limitations.

There are key processes and considerations that will be a part of every assessment. Every assessment should ideally build from the lessons learned of previously completed assessments and identify what local characteristics need to be included in a study. This is done partly by comparing local characteristics to characteristics in previous assessments, including Global and OHI+ assessments. After you have outlined and identified local characteristics and priorities, you will gather information, prepare data, begin to develop models and set reference points, and then calculate scores. This will be done with the OHI Toolbox and workflow that will help you collaboratively organize and complete your assessment transparently, in part through your own OHI+ website that can be shared with other partners and collaborators. Above all, you should be prepared to know that this process takes time and is iterative, meaning that you often return to previous steps.

How long does an assessment take? Past assessments have taken between two and three years, with the time varying depending the size and composition of the team, the challenges encountered in discovering and gathering information, and how many models are redeveloped. The amount of data processing and goal model development needed before you will be able to use the Toolbox also affects the amount of time it takes to conduct the assessment. The skill sets of the team members and the amount of technical resources available are also hugely important factors. You should think about which team members are needed throughout the process, including R programmers and spatial analysts. It will take time for the technical team to become familiar with the OHI Toolbox and GitHub.

1.2 Outcomes of conducting an assessment

Your completed assessment will produce OHI scores for each goal for every region in your study area, and scores within the assessment can be compared with each other. These scores will not be quantitatively comparable to those of other OHI assessments because they differ in the underlying inputs, goal models, and reference points. The only quantitative comparisons can be made within an assessment’s study area, whether between regions or through time (following repeated assessments). However, qualitative comparisons between different OHI assessments can be made because the scores are an indication of how far a region is to achieving its own targets. For instance, if two study areas have scores of seventy and sixty-five, it should be interpreted that the first study area is closer to its management targets than the second is, but since these management targets are different (in addition to the underlying data and models), they cannot be quantitatively compared.

While final OHI scores are valuable information, the process of conducting an OHI assessment can be as valuable as the final results. This is because during an OHI assessment you will bring together meaningful ocean health information from many disciplines. In doing so, you will have a census of existing information and will also identify knowledge and data gaps. Further, conducting an OHI+ assessment can engage many different groups, including research institutions, government agencies, policy groups, non-governmental organizations, and both the civil and private sectors.

1.3 Best practices for OHI assessments

Conducting an assessment requires both an understanding of how past assessments have been completed and the innovation to capture important characteristics of your study area using the information available. You can start by understanding the structure of completed assessments at global and smaller scales and the models that were created. Understanding the approaches in different contexts will help you think about what should be done similarly and differently in your local context. Information, publications, and websites for completed OHI+ assessments are listed at ohi-science.org/projects, and example approaches for each goal are listed at ohi-science.org/goals.

The following Best Practices are from our publication Best practices for assessing ocean health in multiple contexts using tailorable frameworks which is important to read before beginning your assessment.

Best practices of OHI+ assessments

1.3.1 Incorporate core values and characteristics into the OHI assessment framework before gathering information

Begin your assessment by identifying local socio-cultural-economic characteristics and priorities related to ocean health, and how they would ideally be captured with the existing or modified OHI framework. This means understanding the rationale behind the components of the OHI framework and identifying what must be added or removed or redefined to ensure that it best represents the local context. Are all goals relevant to your study area? What should be added, removed, or redefined? In this process it is important to identify not only characteristics that could be included in goal models, but also the important stressors (pressures) and resilience elements within the study area. What are the key issues that should be included for your assessment to be credible, useful, and meaningful? How do people typically relate to the ocean in your area in terms of social and cultural patterns? These are the kinds of questions you should consider prior to assembling the available information.

The OHI framework should guide your assessment, but you should not be constrained by it. If a goal is not relevant, it should be removed. If there are elements important to your study area that are not present within the existing framework, how could they be included? Having a clear picture of how the framework should be restructured and what the assessment should include is very important before moving on to assemble information, because otherwise the assessment could be biased by what information is available instead of what is important to include. When specific information is limited there are ways to capture them with indirect measures.

1.3.2 Maintain core values and characteristics within the assessment framework regardless of limited information quality

The assessment framework can be implemented using the best freely-available existing information, even if the information available is ‘limited’ or not ‘ideal’. ‘Limited’ information may be of low quality, have gaps, or be indirectly obtained through modeling instead of being directly measured. Different methods can be used to work with limited data, such as gap filling, incorporating indirect (proxy) or place-holder information, or using intermediate models.

Remaining true to the conceptual framework by using those methods, hence developing less-than-ideal goal models, provides a fuller picture than redesigning it to only include characteristics where ideal information is available. This is because all key characteristics in the system should be represented somehow in a comprehensive assessment, even if assumptions must be made to compensate for missing information. If these methods, including assumptions and rationales, are clearly considered and explained, completed assessments will not only provide the best possible picture of the current system but will also identify information gaps and highlight areas for improvement. Such scrutiny of available knowledge could be lost if important elements were simply excluded from the assessment due to imperfect representation.

1.3.3 Strategically define spatial boundaries balance information availability and decision-making scales

Identifying the spatial boundaries of the Regions within the Assessment Area is extremely important because OHI scores are calculated for each unique Region, and the boundaries will be used to aggregate or disaggregate input information reported at different spatial scales. Spatial boundaries should be defined with geographic information system (GIS) mapping software, ideally per management jurisdiction (see Defining spatial boundaries section for technical guidance). Jurisdictional boundaries are optimal because it is often at these scales where management and policy decisions are made, cultural priorities and management targets are identified, and information is collected in standardized and therefore comparable ways.

Within the OHI framework, there is no limit to the number of Regions that can exist within the Assessment Area; the number is only constrained by data availability and the utility of having scores calculated for a particular Region. Although it is possible to assess only one region in the study area (i.e. the region is the assessment area), this might not be ideal because it eliminates the possibility of making comparisons or identifying geographic priorities within the study area.

1.4 The OHI Toolbox

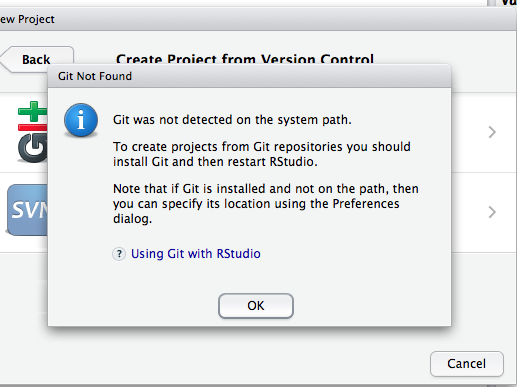

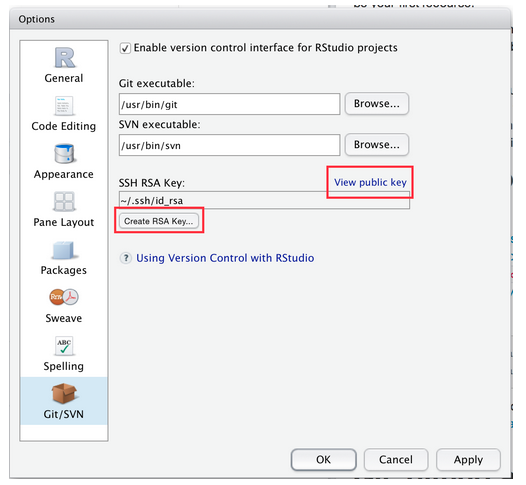

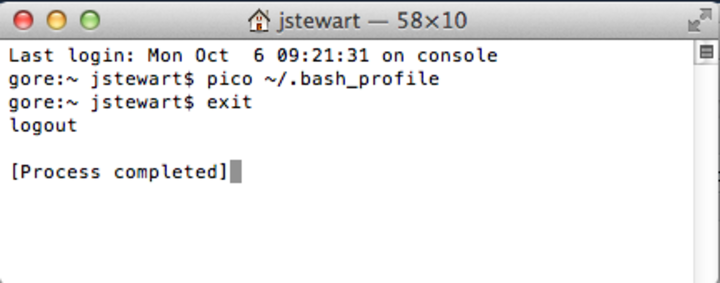

The OHI Toolbox is made to organize and process data, document decision making, calculate scores, and share results within or outside your team. It was created to facilitate score calculations as well as the organization of information and transparency of the entire workflow. The Toolbox is built with open-source, freely available software, particularly Github and RStudio.

Github and RStudio are a highly effective combination for your team to conduct your OHI+ assessment in a collaborative, transparent, and reproducible manner. RStudio is the coding environment for the programming language R and is where all the data processing, calculations, and writing are done. Github is a online version-control system where each change you save in RStudio is recorded through time and easily traceable. Multiple people can therefore collaborate on the same project and view the changes done by one another. Github also provides a platform for decision-making conversations, similar to email threads but better kept and organized.

Read APPENDIX 1: Toolbox Software for more details on their features, how they work together, and how to set them up your own computer.

Treat the toolbox as your notebook, calculator, and presentation of your work. No more endless email chains or passing spreadsheets back and forth! If someone wants to see where your data comes from, how you have processed the data, the rationale for including or excluding certain data, and how the scores are calculated, they can find the answers from your work. It increases the credibility and reproducibility of your assessment.

It also makes your technical team more stable.

The Toolbox will also preserve team memory. If there are personnel changes, it is easy for any new member to pick up where it was left when your data preparation has been documented clearly and kept in one place. It will also help your “future self”: months or years later you can revisit your work and understand what you have done.

Your Toolbox is organized into two sequential repositories where you will store all your work. They will be tailor-made for your OHI+ assessment upon request.

2 Requesting your repositories

Using the OHI Toolbox for your assessment is divided into two steps. When you decide to conduct an OHI+ assessment, and even before you have defined your regions, we can immediately provide you with a Starter Github repository (Starter Repo) to get you familiar with the Github/RStudio workflow, and to help you through the data exploration and region-defining stage.

Once you have finalized your regions and provide us with appropriate files (see next section), we will upgrade you with a Full repository (Full Repo) with pre-populated data layers extracted from the most recent Global OHI assessment.

2.1 The Starter Repo

2.1.1 Why a Starter Repo?

The purpose of this repository is to help you learn the Github/RStudio workflow, and to organize and explore available data to help finalize the spatial boundaries you are considering. We highly encourage you to code this exploration in R.

Learn Github/RStudio and collaborate with your team

OHI promotes open science where detailed information about how the assessment is conducted is documented and shared. Providing public access to your input data, computational code, as well as rationales of each step of the assessment is becoming the standard for scientific inquiry, so every effort should be made to achieve those aims. Github/RStudio is a powerful combination that organizes and processes information for this purpose and greatly increases the efficiency of conducting repeated and reproducible assessments, and is the backbone of the OHI Toolbox.

The Starter Repo will get you familiar with this system, and help you develop an efficient workflow to script data exploration and document your decision-making process at once. Furthermore, the scripting language can be directly rendered as webpages, PDFs, presentations, or Word documents for internal and public communication!

Prepare and Organize Data

Data preparation (formatting, exploring, plotting data) takes the largest amount of time in all OHI assessments. An OHI assessment deploys from dozens to more than a hundred data layers coming from as many public data sources. Very rarely can raw data be used in the format you receive them; they require a significant amount of cleaning and formatting before they become usable OHI data layers. During that exploration process, you’ll likely need input from colleagues or outside experts and go through rounds of revisions. Instead of trying to track changes between dozens of data files and long email chains yourself, use Github’s version control system that saves each version automatically. You can document conversations and decisions alongside your R code.

Scripted data exploration (e.g. done in

R) is useful whether you decide to use the data in your assessment or not. If you use the data, you have already begun preparing it for the Toolbox. And if you don’t use the data, it can be very important to be able to communicate why.

Finalize spatial boundaries

OHI scores are calculated for regions with clearly defined boundaries, and you will use your Starter Repo to finalize them. Spatial boundaries are often set based on jurisdictions (i.e. within which boundaries would OHI scores be of interest) and data availability (i.e. within which boundaries are data reported) See above section for more details. The Defining Spatial Boundaries section provides instructions of what you need to consider and the files you need to provide to the OHI Team. Once you provide final files, the OHI Team will be able to create your Full Repo.

You could also start exploring goal models, which will reduce the amount of work you will do when you receive the Full Repo for scores calculation.

2.1.2 What’s in the Starter Repo?

The Starter Repo simply contains a prep folder, which includes folders to organize, document, and explore data for:

- each goal or subgoal

- pressures

- resilience

Within each folder, it’s up to you how to populate and organize the contents. We recommend that within each folder you save the raw data files if possible, and create a data preparation script (eg. CW_data_prep.R or CW_data_prep.Rmd for Clean Water) to explore data and document decision making.

2.1.3 How to request a Starter Repo?

You can create a GitHub account at http://github.com with a username and password. To request a repo, email info @ohi-science.org with three things:

- your Github username

- the name of your assessment area (eg. the Gulf of Guayaquil)

- a shortened name for the repository (our convention is a 3-letter code). For example, the Gulf of Guayaquil assessment had

gyeas their code.

2.2 The Full Repo

2.2.1 Why a Full Repo?

After you have explored your data and finalized spatial boundaries, the Full Repo offers the rest of the scripts and files you need to complete your OHI+ assessment.

The Full Repo is a repository pre-populated with R scripts and data layers disaggregated from the most recent global OHI assessment, structured in the same way as the global OHI assessments. This way you don’t need to start your assessment from scratch; you can explore a working repository and build from there. For example, instead of writing scripts for goal models from the beginning, you can modify existing scripts to suit your own needs. Data layers disaggregated from the global assessments are available to use, however, we do recommend that you replace as many global data with higher-resolution local data as possible.

2.2.2 What’s in the Full Repo?

The Full Repo contains all the files you need to calculate scores, and produce figures and reports:

- data layers are organized into one folder (

layers), with a registry that lists attributes about them and what they are for (layers.csv) - goal models are organized in one file (

functions.r) in the configure (conf) folder - pressures and resilience matrices that indicate which pressures/resilience apply to which goal, also in the configure (

conf) folder - scripts that use

ohicore, anRpackage built by the OHI Team to calculate OHI scores, create visuals and other core operations.

You can see a full description of each file and script and how they are organized in the File System section.

2.2.3 How to request a Full Repo?

To request a Full Repository, you will need to email info @ohi-science.org with:

- shape files of your finalized spatial boundaries

- the name of your scenario, which is often the definition of your assessment region and the year (e.g. province2016, region2015)

We can then provide you with a Full Repo with the regions defined and pre-populated data layers extracted from the most recent global assessment according to your regional boundaries.

3 Starter Repository

In the Starter Repo, you will have one main prep folder, where you will organize and explore available data and finalize spatial boundaries while learning the Github/RStudio workflow. To be transparent and repeatable, all files should be written in R (or R Markdown).

3.1 Discovering and gathering input information

To promote transparent communication and aid in reproducibility, it is always a good practice to record information about data sources (i.e. ‘metadata’) and explanation of how they are processed in the script. For example, it is important to include:

- data source

- data url or website

- date accessed, contact information

- processing plan

A hallmark of the OHI is that it uses freely-available existing information (data and indicators) to create the models that capture the philosophies of individual goals. The quality of the inputs are important because calculated OHI scores area only as good as the inputs on which they are based, and it is important to identify what was included (and excluded). Assembling the appropriate input information, which means discovering, gathering, and processing data and indicators, is critical to any OHI assessment.

Reading Best practices for assessing ocean health in multiple contexts using tailorable frameworks can help you plan what information to look for. Then, after your team has tailored the OHI framework for your study area and identified the information that ideally would be included, the data discovery and gathering process can begin. There are many decisions to make when deciding which data are available and appropriate to include in your assessment. Finding appropriate data requires problem-solving abilities and creativity, particularly when ideal data are unavailable. You will need input information to calculate status models as well as pressures and resilience.

3.1.1 Thinking creatively

Humans interact with and depend upon the oceans in complex ways, some of which are easy to measure and others of which are harder to define. More familiar measurements include providing seafood, or disposing of waste. A less familiar measurement is how marine-related jobs affect coastal communities, or how different people receive or perceive benefits simply from living near the ocean. Thinking creatively and exploring the information available can make your assessment more representative of reality.

Data used in OHI assessments spans a wide array of disciplines beyond oceanography and marine ecology. It is important to think creatively and beyond the interests of a specific institution or one particular field of study. Therefore, it is necessary to look beyond the most known or obvious data sources to find data relevant for the goals in the study area. Discussions with colleagues, literature searches, emails to experts, and search engines are good ways to understand what kinds of data are collected and to hunt for appropriate data. Investigate what kinds of information are available from government and public records, scientific literature, academic studies, surveys and reports, etc.

3.1.2 Data sources

Existing data and indicators can be gathered from many sources across environmental, social, and economic disciplines. This includes government reports and project websites, peer-reviewed literature, masters and PhD theses, university websites, and information from non-profit organizations, among others.

All data must be rescaled to specific reference points (targets) before being combined with the Toolbox; therefore setting these reference points at the appropriate scale is a fundamental component of any OHI assessment. This requires your assessment team to capture the philosophy of each Index goal and sub-goal using the best available data and indicators. Indicators that are already scaled (e.g., from 0-1 or 0-10) can easily be incorporated into your assessment; they have already been scaled to some kind of identified target or reference point. Data that have not been scaled in most cases will need to be, whether this is by scaling to the maximum value in the range or to some other understood value. You should think about how you would rescale data during your search.

Because data and indicators will come from different sources, they will also have different formatting. To include these data and indicators in your assessment, you will need to process these files into the format required by the Toolbox, which is explained in the section Formatting Data for the Toolbox. When data have been prepared and formatted for the Toolbox, they are called layers. Because creating layers can be quite time-intensive, data should only be prepared for the Toolbox after final decisions have been made to include the data or indicator in your assessment, and after the appropriate goal model and reference points have been finalized.

3.1.3 Gathering responsibilities

Gathering appropriate data requires identifying and accessing existing data. It is important that team members responsible for data discovery make thoughtful decisions about whether data are appropriate for the assessment. Data discovery and acquisition are typically an iterative process, as there are both practical and philosophical reasons for including or excluding data.

3.1.4 Requirements for data and indicators

There are six requirements to remember when investigating (or ‘scoping’) potential data and indicators that are presented in this section. It is important that data satisfy as many of these requirements as possible. To meet these requirements, you may have use appropriate methods to fill gaps in the data set. Data sources may need to be excluded from the analyses if requirements are not met and gap-filling solutions are not possible. If data cannot be included, you may elect to use layers from the global assessment or identify other data or modeling approaches.

3.1.5 Relevance to ocean health

There must be a clear connection between the data and ocean health, and determining this will be closely linked to each goal model.

3.1.6 Accessibility

The two main points regarding accessibility are whether the source is open access and whether the data or indicators will be updated regularly.

The Index was created in the spirit of transparency and open-access, using open-source software and online platforms such as GitHub, is to ensure as much accessibility and open collaboration as possible. Data and indicators included should also follow these guidelines, so that anyone wishing to understand more about the Index may be able to see what data were used and how. For this reason we emphasize the importance of using data that may be made freely downloadable, as well as the importance of clearly documenting all decisions and reasons for the choices made in selecting data, indicators, and models.

Index scores can be recalculated annually as new data become available. This can establish a baseline of ocean health and serve as a monitoring mechanism to evaluate the effectiveness of actions and policies in improving the status of overall ocean health. This is good to keep in mind while looking for data: will it be available again in the future? It is also important to document the sources of all data so that it is both transparent where it came from and you will be able to find it in the future.

3.1.7 Quality

Understanding how the data or indicators were collected or created is important. Are they collected by a respected organization with quality control? Are there any protocol changes to be aware of? For instance, were there changes in the collection protocol to be aware of when interpreting temporal trends?

3.1.8 Reference point

Most data will need to be scaled to a reference point. As you consider different data sources it is important to think about or identify what a reasonable reference point may be. Ask the following types of questions as you explore data possibilities:

- Has past research identified potential targets for these data? For example, fisheries goal require a Maximum Sustainable Yield (MSY).

- Have policy targets been set regarding these data? For example, maximum levels of pollutants allowed in beaches.

- Would a historic reference point be an appropriate target? For example, the percent of habitat coverage before coastal development took place.

- Could a region within the study area be set as a spatial reference point? For example, a certain region is regarded as the leader in creating protected areas.

3.1.9 Appropriate spatial scale

Data must be available for every region within the study area. It is not always possible to fully meet the spatial and temporal requirements with each source. In these cases, provided that the gaps are not extensive, it can still be possible to use these data if appropriate gap-filling techniques are used (See: Formatting Data for Toolbox section).

3.1.10 Appropriate temporal scale

Data must be available for ideally the five most-recent years to calculate the recent trend. For some goals, where temporal reference points are desirable, longer time series are preferable.

3.1.11 The process of information discovery

The most important thing to remember when gathering data and indicators is that they must contribute to measuring ocean health. Not all information that enhances our knowledge of marine processes directly convey information about ocean health and may not be appropriate within the OHI framework. Because of this, compiled indicators can sometimes be more suitable than raw data measuring single marine attributes.

Whether you are working goal-by-goal, or layer by layer, it is important to consider where you can find synergies in data discovery. For example, while you are looking for information for the fisheries goal, you may also find data layers for fishing pressures, such as metrics on bycatch or trawling intensity. This will save you time and allow you to start thinking about how to rank pressures and resilience weights on your goals as well. Conceptually, it will help your team build a picture of how your goals are interlocking in a way that is reflective of the actual linkages that exist in the connected systems you are studying. Some key examples are listed below, and are further explained in the following sections.

You should begin by understanding and comparing the best approaches used in assessments that have been completed, including the global assessments (Halpern et al, 2012; 2013), Brazil (Elfes et al. 2014), Fiji (Selig et al., 2014), and the U.S. West Coast Assessment (Halpern et al., 2014). For OHI+ assessments, if finer-resolution local data were available in the study area, these data were either incorporated into modified goal models that used locally appropriate and informed approaches or into the existing global goal model. When local data were not available, the global-scale data and global goal models were used, which is least desirable because it does not provide more information than the global study.

When looking for data, the following decision tree may be useful when going goal-by-goal for discovering data and developing models:

3.1.11.1 Example: U.S. West Coast data discovery

Below are examples of some decisions made when exploring available data for the U.S. West Coast assessment. Determining whether certain data could be included began with a solid understanding of the layers and models included in the global assessment. Since the U.S. West Coast is a data-rich region, finer-resolution local data could be used in place of many of the global data layers. The U.S. West Coast assessment had five regions: Washington, Oregon, Northern California, Central California, and Southern California.

There are a lot of existing data that contribute to our scientific understanding of ocean processes and interactions but are not ideal for the OHI. Reasons to exclude data are both due to practical requirements (e.g., spatial or temporal resolution) and philosophical requirements (i.e., they do not help capture the attributes of interest for assessing ocean health). Some common reasons for excluding data are:

The data do not cover the entire area of the reporting region. The state of California had excellent, long-term data on public attendance at state parks that would have been quite useful in the calculation of the tourism and recreation goal. However, data were only available for three of the five regions (the three California regions but not Oregon and Washington), so they could not be used.

There is not a clear and scientifically observed relationship between the data and ocean health. Along the U.S. West Coast, kelp beds are a very important habitat because of their contribution to biodiversity and coastal protection. However, kelp coverage variation is driven primarily by abiotic natural forcing (wave or storm disturbance and temperature) and thus is not a good indicator of kelp forest health, particularly in the case of anthropogenic impacts. For these reasons kelp coverage was not included in the assessment.

The feature being measured may provide benefits to people, but this feature is not derived from marine or coastal ecosystems. Sea walls and riprap provide coastal protection to many people along the U.S. West Coast. However, these structures are not a benefit that is derived from the marine ecosystems, so only coastal habitats were included in the calculation of this goal. These data can be included as a pressure due to habitat loss. They were not used as a resilience measure because they can often have negative side effects (e.g., by altering sedimentation dynamics), and because they have limited long-term sustainability (i.e., they need maintenance).

Data collection is biased and might misrepresent ocean health. The U.S. Endangered Species Act identifies a species list focused on species of concern within the US. As such, these data are biased in the context of ocean health since they only assess species whose populations may be in danger. For the calculation of the biodiversity goal, using these data would be inappropriate because this goal represents the status of all species in the region, not just those that are currently of conservation concern. Using these data may have shown the status of biodiversity to be lower than it really is because the selection of species to assess was already biased towards species of concern.

Time series data are not long enough to calculate a trend or a reference point (when a historical reference point is most appropriate). For the U.S. West Coast, the current extent of seagrass habitats was available, however, these do not exist for previous points in time in most areas, so could not be used to calculate the trend or set a historical reference point. Therefore, we estimated the trend in health of seagrass habitats using as a proxy the trend in the main stressor (i.e., turbidity). In other words, we assumed that the rate of seagrass loss was directly proportional to the rate of increase in turbidity. Similar solutions may be used to estimate trends in your own assessment, if there is scientific support for assuming that the trend of what we want to assess (or the relationship between the current state and the state in the reference year) has a strong relationship with the trend of the proxy data available.

3.2 Formatting Data for the Toolbox

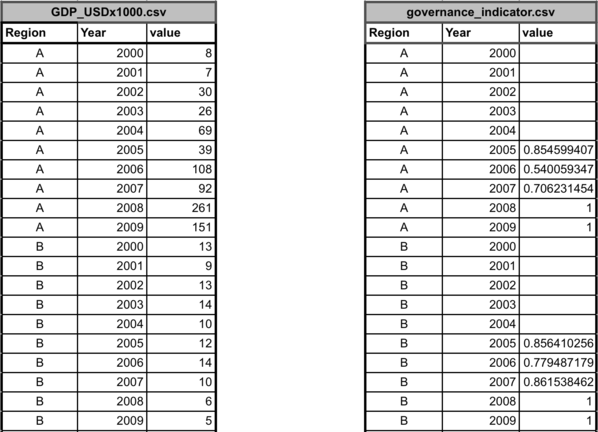

The OHI Toolbox expects each data layer to be - in its own .csv file, - with data available for every region within the study area, - with data organized in ‘long’ format (as few columns as possible), and - with a unique region identifier (rgn_id) associated with a single score or value.

As you discover and gather potential data sources, they can be prepared and explored in the Starter Repo. Data preparation is done in R using input data stored in .csv files (or ‘comma-separated value’). These files can be opened as a spreadsheet using Microsoft Excel or similar programs. Each data layer (data input) has its own .csv file, which is combined with others within the Toolbox for the model calculations. These data layers are used for calculating goal scores, meaning that they are inputs for status, trend, pressures, and resilience. The global analysis included over 100 data layer files, and there will probably be as many in your own assessments. This section describes and provides examples of how to format the data layers for the Toolbox.

It is highly recommended that layer preparation occurs in your repository’s

prepfolder as much as possible, as it will also be archived by GitHub for future reference. The folder is divided into sub-folders for each goal and sub-goal, where you will upload the raw data and manipulate the data indata_prep.Rscripts.

OHI goal scores are calculated at the scale of the reporting unit, which is called a ‘region’ and then combined using an area-weighted average to produce the score for the overall area assessed, called a ‘study area’. In order to calculate trend, input data should be available as a time series for at least 5 recent years (and the longer the time series the better, as this can be used in setting temporal reference points).

Finalized data layers have at least two columns: the

rgn_idcolumn and a column with data that is best identified by its units (eg. km2 or score). There often may be ayearcolumn or acategorycolumn (for natural product categories or habitat types).

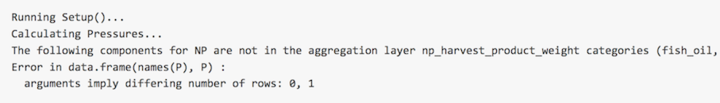

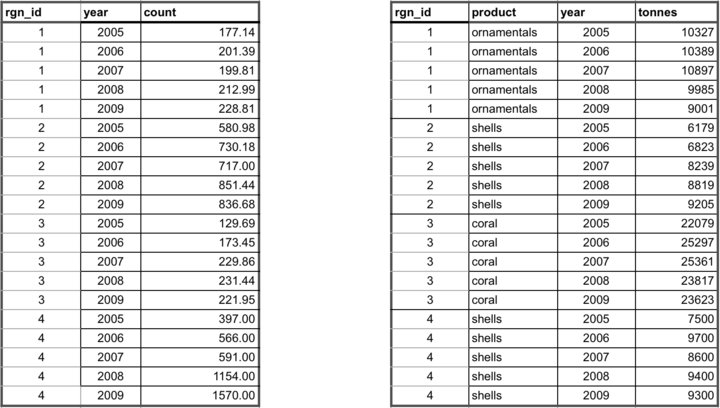

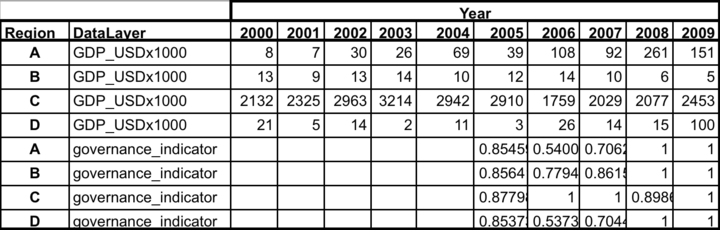

The example below shows information for a study area with 4 regions. There are two different (and separate) data layer files: tourism count (tr_total.csv) and natural products harvested, in metric tonnes (np_harvest_tonnes.csv). Each file has data for four regions (1-4) in different years, and the second has an additional ‘categories’ column for the different types of natural products that were harvested. In this example, the two data layers are appropriate for status calculations with the Toolbox because:

- At least five years of data are available,

- There are no data gaps

- Data are presented in ‘long’ or ‘narrow’ format (not ‘wide’ format – see “Long Formatting”" section).

3.2.1 Uploading and formatting raw data files

Unformatted data files can be uploaded to the pre-proc folder in your github repository and processed with R. Saving raw data in the same repository helps to keep track of how the data has been treated.

Raw files can be uploaded as .csv or .xlsx. However, formatted data has to be saved as .csv in the layers folder.

In addition to pre-proc, a prep folder has been set up for data formatting. Inside the folder:

- several sub-folders exist to house formatted data files for each goal/sub-goal

prep.ris where formatting occurs for each goal/sub-goal.READMEis where you can record information on raw data files and processing for future reference

Example of data in the appropriate format:

3.2.2 Gapfilling

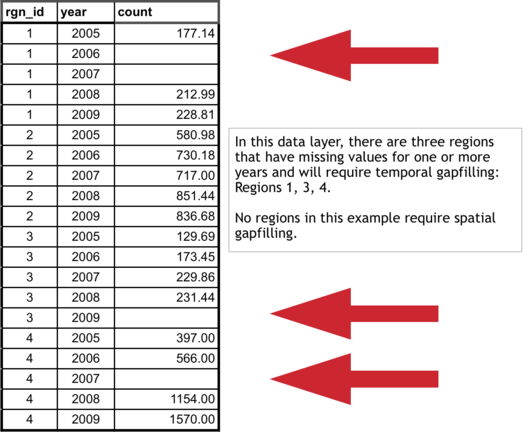

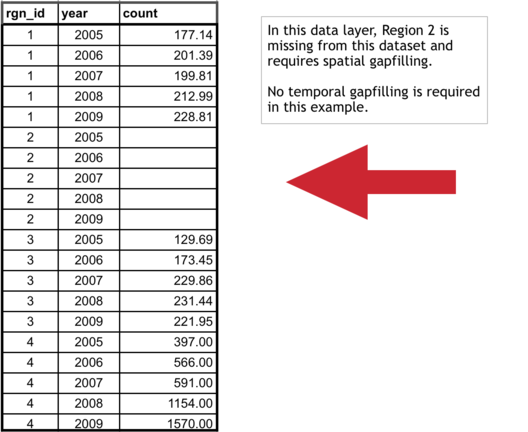

It is important that data prepared for the Toolbox have no missing values or ‘gaps’. Data gaps can occur in two main ways: 1) temporal gaps: when several years in a time series in a single region have missing data, and 2) spatial gaps: when all years for a region have missing data (and therefore the whole region is ‘missing’ for that data layer).

How these gaps are filled will depend on the data and regions themselves, and requires thoughtful, logical decisions to most reasonably fill gaps. Each data layer can be gapfilled using different approaches. Some data layers will require both temporal and spatial gapfilling. The examples below highlight some example of temporal and spatial gapfilling.

All decisions of gapfilling should be documented to ensure transparency and reproducibility. The examples below are in Excel, but programming these changes in software like R is preferred because it promotes easy transparency and reproducibility.

3.2.2.1 Temporal gapfilling

Temporal gaps occur when a region is missing data for some years. The Toolbox requires data for each year for every region. It is important to make an informed decision about how to temporally gapfill data.

Often, regression models are the best way to estimate data and fill temporal gaps. Here we give an example that assumes a linear relationship between the year and value variables within a region. If data do not fit a linear framework, other models may be fit to help with gapfilling. Here we give an example assuming linearity.

Using a linear model can be done in most programming languages using specific functions, but here we show this step-by-step using functions in Excel for Region 1.

Temporal gapfilling example (assumes linearity: able to be represented by a straight line on a graph)):

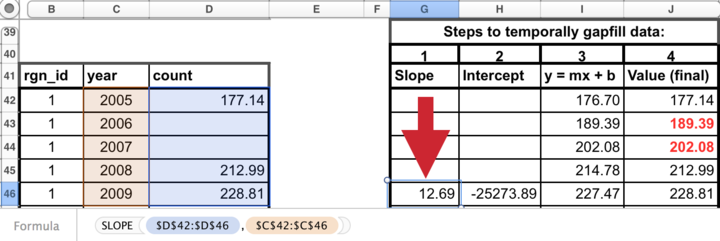

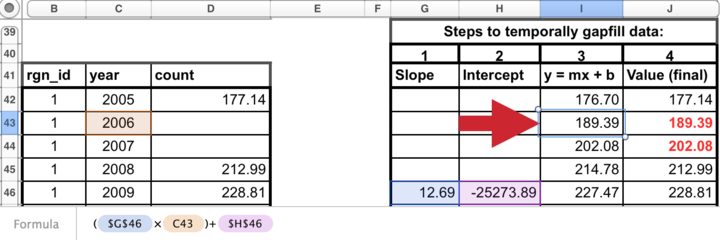

There are four steps to temporally gapfill with a linear model, illustrated in the figures with four columns.

1. Calculate the slope for each region

The first step is to calculate the slope of the line that is fitted through the available data points. This can be done in Excel using the SLOPE(known_y’s,known_x’s) function as highlighted in the figure below. In this case, the x-axis is years (2005, 2006, etc…), the y-axis is count, and the Excel function automatically plots and fits a line through the known values (177.14 in 2005, 212.99 in 2008, and 228.81 in 2009), and subsequently calculates the slope (12.69).

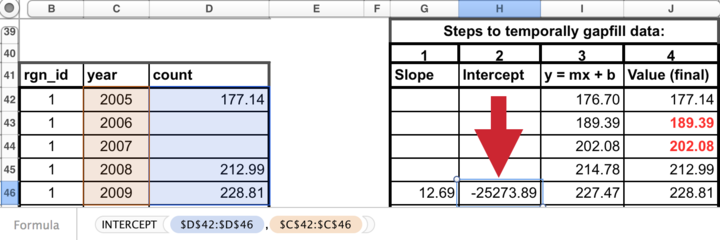

2. Calculate the y-intercept for each region

The next step is to calculate the intercept of the line that is fitted through the available data points. This can be done in Excel similarly as for the slope calculation, using the the INTERCEPT(known_y’s,known_x’s) function that calculates the y-intercept (-25273.89) of the fitted line.

3. Calculate y for all years

The slope and y-intercept that were calculated in steps 1 and 2 can then be used along with the year (independent variable) to calculate the unknown ‘y-values’. To do so, simply replace the known three values into the y = mx + b equation (m=slope, x=year, b=intercept), to calculate the unknown ‘count’ for a given year (189.39 in 2006, and 202.08 in 2007).

4. Replace modeled values into original data where gaps had occurred

Substitute these modeled values that were previously gaps in the timeseries. The data layer is now ready for the Toolbox, gapfilled and in the appropriate format.

3.2.2.2 Spatial gapfilling

Spatial gaps are when no data are available for a particular region. The Toolbox requires data for each region. It is important to make an informed decision about how to spatially gapfilling data.

To fill gaps spatially, you must assume that one region is like another, and data from another region is adequate to be substituted in place of the missing data. This will depend on the type of data and the properties of the regions requiring gapfilling. For example, if a region is missing data but has similar properties to a different region that does have data, the missing data could be ‘borrowed’ from the region with information. Each data layer can be gapfilled using a different approach when necessary.

Characteristics of regions requiring gapfilling that can help determine which type of spatial gapfilling to use:

proximity: can it be assumed that nearby regions have similar properties?

study area: are data reported for the study area, and can those data be used for your regions?

demographic information: can it be assumed a region with a similar population size has similar data?

Spatial gapfilling example:

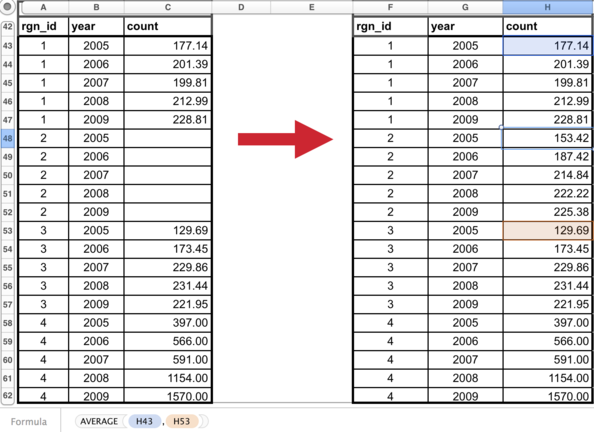

For a certain data layer, suppose the second region (rgn_id 2) has no data reported, as illustrated in the figure above. How to spatially gapfill rgn_id 2 requires thinking about the properties and characteristics of the region and the data, in this case, tourist count.

Here are properties that can be important for decision making:

rgn_id 2:

- is located between rgn_id 1 and 3

- is larger than rgn_id 1

- has similar population size/demographics to rgn_id 3

- has not been growing as quickly as rgn_id 4

There is no absolute answer of how to best gapfill rgn_id 2. Here are a few reasonable possibilities:

Assign rgn_id 2 values from:

- rgn_id 1 because it is in close proximity to rgn_id 2

- rgn_id 3 because it is in close proximity to rgn_id 2 and has similar population size/demographics

- rgn_id 1 and 3 averaged since they are in close proximity to rgn_id 2

Suppose the decision was made to gapfill rgn_id 2 using the mean of rgn_id 1 and 3 since this would use a combination of both of those regions. Again, other possibilities could be equally correct. But some form of spatial gapfilling is required so a decision must be made. The image below illustrates this in Excel.

The data layer is now ready for the Toolbox, gapfilled and in the appropriate format.

3.2.3 Long formatting

The Toolbox expects data to be in ‘long’ or ‘narrow’ format. Below are examples of correct and incorrect formatting, and tips on how to transform data into the appropriate format.

Example of data in an incorrect format:

With ‘wide’ format, data layers are more difficult to combine with others and more difficult to read and to analyze.

Transforming data into ‘narrow’ format:

Data are easily transformed in a programming language such as R.

In R, the tidyr package has the gather command, which will gather the data from a wide format into a narrow format. It also can spread the data back into a wide format if desired. R documentation:

The final step is optional: ordering the data will make it easier for humans to read (R and the Toolbox can read these data without this final step):

Example of data in the appropriate (long) format:

3.2.4 Rescaling your data

An important consideration is how to rescale your data when preparing it for use in the Toolbox. Rescaling involves turning a distribution of data into a value from zero to one. This is based on finding a highest observed or theoretical point in the distribution of the data, and from there, the relative value of the data can be calculated.

3.2.4.1 Example: Global Data Approach

You should base your decision on whether your consider it more appropriate to decide the reference point based on the data distribution of all data points, be they observed or interpolated, or whether we think we should only consider the observed data. If the interpolation covers large areas, and these get assigned values that aren’t very frequent in the observed data, then the two distributions will be very different, and what value is in the 99.99th percentile is different too.

In theory, one would favor deciding the reference point based on as many observations as possible (i.e., interpolate first, then obtain the percentile). In practice, if we think that large interpolated areas are very unreliable, we might prefer to use real observations only (i.e., percentile first, then interpolate).

3.2.5 Naming data layers

Please name each data layer with the following format so it is easy to keep all data organized:

prefix_layername_regionYEAR_suffix.extension

There cannot be any white spaces in any part of the filename: instead, use underscores (’_’).

The prefix will be the letters identifying each goal (two letters) or sub-goal (three letters):

| Goal | Code | Subgoal | Code |

|---|---|---|---|

| Food Provision | FP | Fisheries | FIS |

| Mariculture | MAR | ||

| Artisanal Fishing Opportunity | AO | ||

| Natural Products | NP | ||

| Coastal Protection | CP | ||

| Carbon Storage | CS | ||

| Livelihoods and Economies | LE | Livelihoods | LIV |

| Economies | ECO | ||

| Tourism and Recreation | TR | ||

| Sense of Place | SP | Iconic Species | ICO |

| Lasting Special Places | LSP | ||

| Clean Waters | CW | ||

| Biodiversity | BD | Habitats | HAB |

| Species | SPP |

The layername should be made of words or abbreviations to identify what the layer is (eg. unemployment)

The regionYEAR should identify the assessment scenario: chn2015. This will help separate updated data layers from global data layers (‘glYEAR’).

The suffix of the filename should identify who prepared the data so any questions can easily to sent to the correct person (eg. JL).

The extension identifies the filetype and is separated by a period (.). You must save your files as comma separated values (.csv) since this is the format used by the OHI Toolbox.

Here is an example of a properly named data layer for the tourism and recreation goal, where the data are the percent of unemployment prepared by Julia Lowndes.

tr_unemployment_chn2015_JL.csv

3.3 Defining spatial boundaries

Spatial boundaries of your assessment regions dictate how your data will be aggregated or disaggregated, and are required for getting a Full Repository. OHI scores are calculated for each assessment region, and the region boundaries will be used to aggregate or disaggregate input information reported at different spatial scales. There is no limit to the number of regions in your assessment. However, the number of regions is usually constrained by two things: data availability for those regions, and the utility of having scores calculated for those regions. Ideally, boundaries are drawn per management jurisdiction, and are informed by the scale at which information is available.

The Starter Repository will help you organize preliminary data and make sure data availability matches your desired region assignments. Once you’ve defined your regions and drawn spatial boundaries, the OHI team can create a Full Repository for your assessment.

3.3.1 Balancing geopolitical boundaries and data limitation

The spatial boundaries of OHI regions are typically set based on:

- Management boundaries: geopolitical boundaries, where policy decisions are made (countries, provinces, etc)

- Biogeographic boundaries: based on natural geography (bay, seas, gulf, etc)

- Data: information availability (spatial scale where are data collected)

OHI utilizes data from dozens to >100 data layers collected by many agencies. Some data are collected within boundaries suitable for your desired geopolitical boundaries. Often they are not. For example, you may wish to assess ocean health for each of the ten states along the coast. However, some measurements are taken on the national level and it is difficult to disaggregate data to the state level, or some measurements might only make sense at the watershed level across a few states.

Data disaggregation and gap-filling are possible methods of dealing with missing data, but can dilute the information quality within your assessment. If many data layers don’t fit your desired boundaries, you may consider changing your boundaries. Exploring the data sources in the Starter Repo can help you balance jurisdictional boundaries and information availability, and finalize spatial boundaries that make the most sense for the purpose of your assessment.

Note that the OHI does not take a stance on disputed territories. The boundaries are defined by the original map data providers.

3.3.2 Drawing spatial boundaries

Regions must be unique (non-overlapping), and boundaries must be drawn offshore. Offshore boundaries should be made with spatial methods in order to extend boundaries from those designated on land.

Spatial boundaries must be drawn with geographic information system (GIS) mapping software such as ArcGIS, QGIS, or GRASS. You will need someone with GIS skills to create a shapefile that will be used by the Toolbox to display your information. The shapefile will also be used to extract information for each of your defined regions when data are reported in raster format for a different area. For more information see Wikipedia for Geographic information systems and Shapefiles.

Illustrated below are the conceptual steps required for creating a spatial file with your offshore boundaries. You will ultimately decide where to draw the offshore boundaries. The example below shows a simple example of extending the land boundaries in a straight line; you should consult with a GIS specialist to make sure any extensions make sense with the coastline and natural features, and also make sure they does not conflict with important jurisdictional boundaries.

Data for different goals often cover different spatial extents offshore. For example, Fisheries might use data from the entire EEZ, while Carbon Storage might only cover 3nm from shore. When mapped, OHI scores do not show different spatial extents, but instead show all to the greatest spatial extent. Exclusive Economic Zone (EEZ) boundaries are most often the greatest extent offshore that OHI scores will represent. These regional spatial boundaries do not affect data preparation and analyses, where you could use any spatial extent appropriate for each goal.

One possible method is to create boundaries with Thiessen Polygons, and we provide a Python script that can be used, but it requires ArcGIS. The Python script and further details can be found here.

To create a repository for your assessment, we just need the off-shore marine water boundaries. But you may also make inland buffers for your analyses. For example, do you want coastal population to include activities within 1km of the coast? Or 10km?

3.3.3 Request a Full Repository with offshore boundaries

In order to create a Full Repository for your assessment, we will need the shapefile for your offshore boundaries (and the name of your scenario, which is to the unit of your assessment and the year, for example, province2016). This will help us disaggregate global data to your local regions and populate usable data layers. Please send a .zip file of all files produced to info@ohi-science.org. Files with the following extensions are required (but you can send all files):

.dbf.shp.shx.prj

The .dbf file needs the following in its attribute table:

- rgn_id (unique numeric region identifier)

- rgn_name (unique named region identifier)

- area_km2 or area_hectare (area in km2 or hectares)

3.4 Developing Goal Models, Reference Points, and Pressures and Resilience

Once you have determined which goals are assessed and have begun searching for data and indicators, you can start to develop goal models and set reference points. The decision tree of the data discovery process also applies here: first consider how goals can be tailored to your local context before you consider replicating what was done in the Global Assessments. It is always better to use local goal model and reference point approaches where possible. This section aims to provid you with goal-by-goal guidance on how to find data, pick indicators, set reference point, and develop the model, as well as guideline on how to think about pressure and resilience. But first, let’s see some general tips before diving into the details of each goal model.

3.4.1 Developing multiple goal models at the same time

Goals can be assessed independent of one another. As each goal model is developed and data gathered, it can be assessed without affecting other goals.

However, you can develop some goal models simultaneously and streamline the data search. For example, the habitat-based goals Carbon Storage, Coastal Protection, and the Habitats sub-goal of Biodiversity all rely on the same underlying data, and their models can be developed together. A spatial analyst can create the spatial layers used for these goals with the same source material. This will greatly expedite your data layer preparation. Species data for Iconic Species sub-goal of Sense of Place is often a subset of data from Species sub-goal of Biodiversity. Data for non-food marine products for Natural Products and food products for Fisheries sub-goal of Food Provision are often recored in similar data sources and may need partitioning.

If you wish to further coordinate these activities on a higher level, you could have the same team member coordinate activities for the development of certain goals. That is a consideration when assembling your team and planning your workflow. For more details, please see the goal-specific sections.

3.4.2 Keeping Reference Points in Mind

Setting a reference point is required for every goal model you develop. It is an “ideal” condition, or target, where the goal is considered to be achieved to its full potential. Achieving or exceeding the reference point will result in a score of 100. The choice of a reference point will thus affect how the final scores are calculated, and must be balanced between knowledge of the system, expert judgment, and limitations of the data. You may set an universal reference point for all regions in your study area, or you may set a unique reference point for each region.

Generally there are four types of reference points: + Functional: Scientifically sound target set based on the known responses of variables measured, such as Maximum Sustainable Yield. + Temporal: A historical benchmark is used as a the “ideal” point in the past, such as mangrove coverage in the 1980’s. + Spatial: A region within the study area with the highest input values, and all others are scaled to it. + Established target: Such as a sustainable catch yield by a certain year, or the number of people employed in a marine sector by a certain year.

Which type of reference point to use depends on the goal and available data. How many years of data are available? Can you set a temporal reference point with these data, or do you have to find another dataset or other source of information? In any case, you must balance being realistic with being ambitious. We suggest following the S.M.A.R.T. criteria when choosing a reference point: “Specific,” “Measurable,” “Ambitious,” “Realistic,” and “Time-bound.”

You will learn more, and think more critically about reference points, as you develop the data layers for your assessment.

How to use the reference point in a model

It’s best to explicitly include the reference point in the model equation whenever possible. For example, the Carbon Storage goal model in the global assessment is written like this:

,

,

where Cr is the reference condition of each habitat. See goal-specific sections for more examples.

3.4.3 Identifying pressures and resilience

While you are developing goal models, you should note the links between your goals and pressures and resilience: both the pressures and resilience that affect them and whether the goal acts as pressure or resilience on other goals. It is recommended to begin gathering data of pressure and resilience from the start of the assessment. The team members who are developing specific goals should think about the pressures that act upon those goals as they are data-gathering, and they should think about the data sources that could provide pressures information. However, it may be most useful when one team member gathers all of the data for pressures, since the same pressures often affect multiple goals. See Pressure and Resilience section of this chapter for more information.

Some pressure data are the same or closely-related to data for goals. For example, the Wild-Caught Fisheries goal model requires catch data, which may be the same data source for information on commercial high- and low-bycatch data, which are used as pressures layers that affect Livelhoods and Economies and Biodiversity. In global assessments, the Clean Waters goal is very much linked to pressures layers because the input layers for its status are used as pressure layers. Trash pollution is a pressure that affects Tourism and Reacreation, Lasting Special Places, Livelihoods and Economies, and Species. It is important to remember these linkages as you go through the data discovery process.

You should also start searching for pressures data independent from data for goals. An example would be how climate change impacts will appear in various places in your assessment. Climate change pressures layers can include UV radiation, sea surface temperature (SST), sea-level rise (SLR), and ocean acidification, and these impacts might affect such goals as Natural Products, Carbon Storage, Coastal Protection, Sense of Place, Livelihoods and Economies, and Biodiversity. These linkages will become more clear as you go through the OHI+ assessment process.

4 Full Repository

4.1 File system organization

This section is an orientation to the files within your Full Repository. The file system organization is the same for all assessment repositories, and can be viewed at github.com/OHI-Science or on your computer. While reading this section it is helpful to explore a repository at the same time to become familiar with its contents and structure. The following uses the assessment repository for Ecuador (ecu) as an example, available at www.github.com/OHI-Science/ecu.

Most of your time will be spent preparing input layers and developing goal models. You will also register prepared layers to be used in the goal models. This all will be an iterative process, but generally speaking you will work goal-by-goal, preparing the layers first, registering them, and then developing the goal models in R. to calculate the scores.

4.1.1 Main folders within your Full Repo

The scenario folder is the most important folder within the repository; by default it is named regionYEAR (eg. cnc2016 for New Caledonia 2016) to indicate that the assessment is conducted at the region scale (province, state, district, etc.), based on input layers and goal models used in the most recent global assessment. It contains all of the inputs needed to calculate OHI scores, and you will modify these inputs when conducting your assessment. The scenario folder is explained in detail in this section.

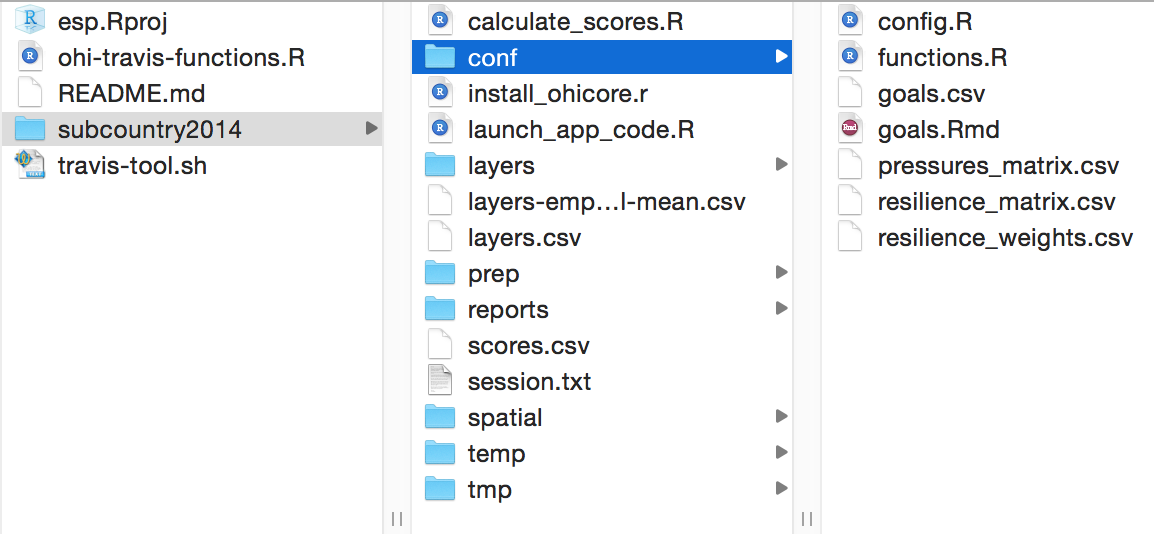

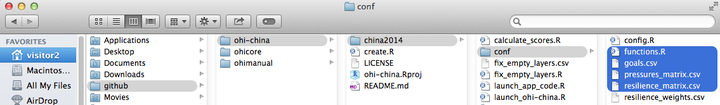

Navigating the assessment repository. The figure shows Mac folder navigation above and Windows navigation below.

When conducting your assessment, you can rename your scenario folder to reflect the subcountry regions in your study area and year the assessment was completed. For example, province2015 would indicate the assessment was conducted for coastal provinces in the year 2015.

Once you complete your assessment with the regionYEAR (or equivalent) scenario, further assessments can be done simply by copying the regionYEAR folder and renaming it. This can be done for future assessments, for example assessment2016 or assessment2018, which eventually would enable you to track changes in ocean health over time. You can also copy scenario folders to explore different policy and management scenarios, for example regionYEAR_policy1.

This figure illustrates the files contained within the assessment repository’s regionYEAR scenario folder, and in which step of the Toolbox workflow they are associated with. Important files are either .csv text files or .R script files. Files are organized into different folders within the regionYEAR folder, and you will modify some of these files while leaving others as they are.

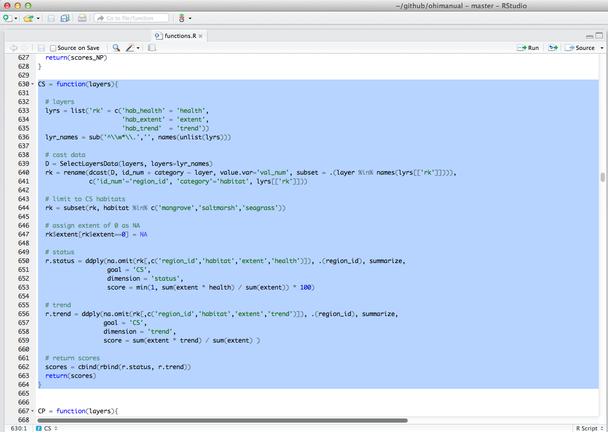

The conf folder within the regionYEAR scenario folder, the conf folder includes important configuration files required to calculate OHI scores. Most of the maneuvering in this phase is done within this folder. There are both .R scripts (config.R and functions.R) and .csv files (goals.csv, pressures_matrix.csv, resilience_matrix.csv, and resilience_weights.csv), which will be introduced individually in the next section.

The conf folder contains important R functions and .csv files.

The prep folder is important in the beginning of your assessment, as it is where you will store and manipulate raw data to get them ready for calculations.

Over all, all the main files you will encounter are either of the two file types:

- ** .csv files** contain data inputs or configuration information.

- ** .R scripts** are written in the programming language R and use data inputs for processing and calculations.

We will introduce those files below roughly in the order and the frequency of use:

4.1.2 Data layers

- raw data files in

prepfolder layersfolderlayers.csvgoals.csvlayers-empty_swampping-global-mean.csvpressures_categories.csvpressures_matrix.csvresilience_categories.csvresilience_matrix.csvscores.csv

raw data layers in “prep” folder

This is where you will store (and manipulate) raw data files before modifying goal models. We recommend separating data layers into different folders by goal.

layers folder

This folder contains all layers required to calculate goal scores, and each layer is an individual .csv file. The names of the .csv files within the layers folder correspond to those listed in the filename column of the layers.csv. All .csv files can be read in R, or with text editors or spreadsheet editors like Microsoft Excel.

The layers folder contains every data layer as an individual .csv file. Mac navigation is shown on the left and Windows navigation is shown on the right.

Note that each .csv file within the layers folder has been formatted consistently. The Toolbox expects all data layers to be in the correct ‘long format’ and in separate files. Each file also has a column with unique region identifier (rgn_id). These numeric region identifiers have region names associated with them, that are set in rgn_labels.csv and can be modified.

TIP: You can check your region identifiers (rgn_id) in the

rgn_labels.csvfile in thelayersfolder.

/glYEAR and /scYEAR suffixes

In your repository, layers are provided for your country based on input information from the YEAR global assessment. The global assessment had information for your country at the the spatial scale of the entire country, whereas your assessment has information for each subcountry region within your country. In most cases, layers from the global assessment was allocated equally to all regions in your study area (country). When this occurred, the layer was given a suffix of _glYEAR to indicate that information is equal across all regions in the study area. While these layers may not provide much useful information to your assessment, the proper input structure is provided in these layers. Some layers contain information such as km2 of habitat that could not be equally allocated across all regions since this would provide a sum much greater than reality. In these cases, layers were down-weighted based on the proportion of offshore area or coastal population density. These layers have the suffix _scYEAR with an indication of what was used to downweight. While this method removes any error of inflated sums and provides the Toolbox with functioning layers, the allocation of these values may not be sensical to your study (i.e. if corals are only present in some regions of your study area but they are allocated to all).

Differences in input layers with equal information for each region (suffixed with _glYEAR) and weighted information for each region (suffixed with _scYEAR).

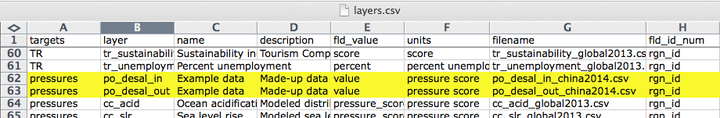

layers.csv

The layers.csv file is the registry and directory that manages all data required for you assessment. All relevant input information is prepared as individual data layers and then registered in this file. layers.csv is vital to organizing all the data to calculate scores and for visualizations

layers.csv is easiest to view in spreadsheet software (i.e. Microsoft Excel). When you open it, you will see that each row of information represents an individual input layer that has been prepared for the Toolbox. The first columns contain information that will be updated by your team as you incorporate modified or new layers: targets, layer, name, description, fld_value, units, filename.; all other columns are generated later by the Toolbox as it confirms data formatting and content and alerts you of any formatting inconsistencies.

- targets indicates which goal or dimension uses the layer. Goals are indicated with two-letter codes and sub-goals are indicated with three-letter codes (see the table just below). Pressures, resilience, and spatial layers indicated separately.

- layer is the identifying name of the input layer that will be used in R scripts like

functions.Rand .csv files likepressures_matrix.csvandresilience_matrix.csv. - name is a longer title of the input layer.

- description is further description of the input layer, including the source of the original data.

- fld_value the values’ units in the input layer. The information in this column must match the column header in the input layer.

- units the values’ units in the input layer. This differs from fld_value above as the units column and has more descriptive naming.

- filename is the input layer itself. This file has input information for each region within the study area, and is located in the

regionYEAR/layersfolder.

| Goal | Subgoal | 2- or 3- letter code |

|---|---|---|

| Food Provision | FP | |

| Fisheries | FIS | |

| Mariculture | MAR | |

| Artisanal Fishing Opportunity | AO | |

| Natural Products | NP | |

| Coastal Protection | CP | |

| Carbon Storage | CS | |

| Livelihoods and Economies | LE | |

| Livelihoods | LIV | |

| Economies | ECO | |

| Tourism and Recreation | TR | |

| Sense of Place | SP | |

| Lasting Special Places | LSP | |

| Iconic Species | ICO | |

| Clean Waters | CW | |

| Biodiversity | BD | |

| Habitats | HAB | |

| Species | SPP |

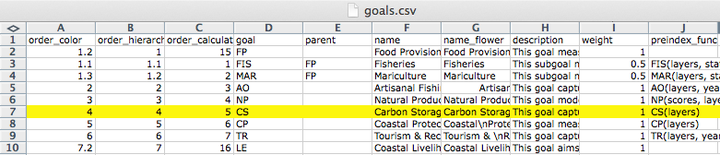

goals.csv

goals.csv is a table with information about goals and sub-goals, including:

- order_color & order_hierarchy: the order to display in flower plots

- order_calculate: the order in which the goals and sub-goals are calculated for the overall index scores

- goal & parent: indicates the relationship between sub-goals and supra-goals (i.e. goals with sub-goals)

- weight: how each goal is weighted to calculate the final Index scores

- preindex_function: indicate what parameters are called to calculate scores for goals and sub-goals in

functions.r - postindex_function: indicate what parameters are called to calculate scores for supra-goals in

functions.r

pressures_categories.csv

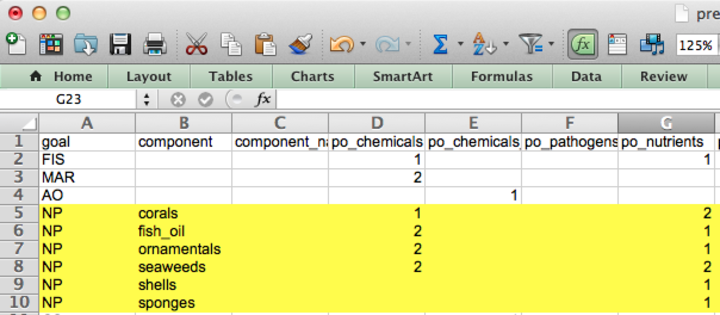

This is a table to record the name of each pressures data layer, its category, and sub-category. Each data layer name is the same name that’s saved in the layers folder and is registered in layers.csv. Each layer falls under one of two categories - ecological or social pressures, and one of several sub-categories to further represent the origin of the pressure (e.g. climate change, fishing, etc), which is also indicated by a prefix of each data layer name (for example: po_ for the pollution sub-category). For more information, see how to modify pressures layers.

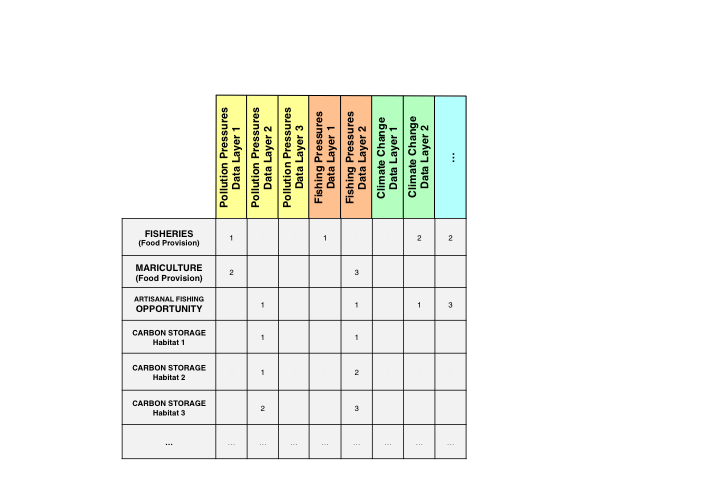

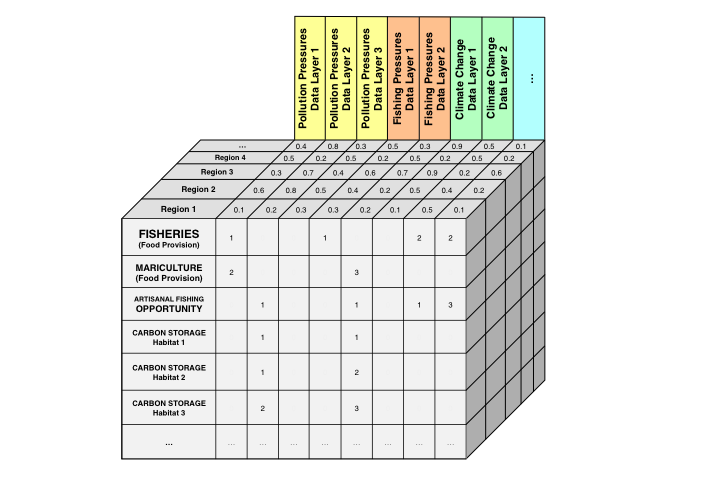

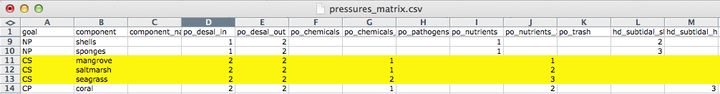

pressures_matrix.csv

It is a table that indicates which individual pressures (stressors) affect which goal, sub-goals, or components, and weights them from 1-3 (a weight of 0 is shown as a blank). A higher weight indicates more negative impacts on that goal or component of the goal. These weights are relative to each row of the matrix (goal, sub-goal, or component). These weights are used in global assessments based on scientific literature and expert opinion, and you can modify the weightings if necessary for your assessment. The pressures matrix is the same as Table S25 in the Supplementary Information for Halpern et al. 2012.

Each pressure (column) of the pressures matrix is the layer name of the pressures layer file that is saved in the layers folder and is registered in layers.csv, matching what’s recorded in the pressures_categories.csv. For more information, see how to modify pressures layers.

resilience_categories.csv

Similar to pressures_categories.csv, this file contains information on each resilience data layer, including its name, category, and sub-category. Each resilience layer’s name is the same as the data layer to be saved in the layers folder and is registered in layers.csv. In addition, it also includes information on category type - ecosystem, regulatory, or social, indicating the origin of the resilience layer.

Each resilience layer is also assigned a weight of 0-1, representing the level of resilience against pressures. Different from the values used in pressures matrix, the resilience weights depend on the level of information available. For more information, read how to modify resilience layers.

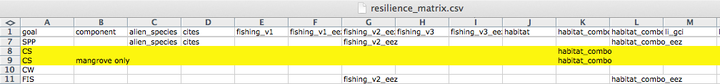

resilience_matrix.csv

It is a table that indicates which individual resilience measures affect which goal, sub-goals, or components. These weights are stored in a separate file in the conf folder: resilience_weights.csv. The resilience matrix is the same as Table S26 in the Supplementary Information for Halpern et al. 2012. For more information, read how to modify resilience layers.

scores.csv

scores.csv is a text file containing the calculated scores for each dimension (future, pressures, resilience, score, status, trend) for each region in the study area. Regions have the numeric identifiers set in regionYEAR/layers/rgn_labels.csv and the study area has the numeric identifier of 0. Scores are calculated with registered layers in layers.csv: when you begin an assessment this will be information for your country from the global YEAR assessment and goal models from the global YEAR assessment.

layers-empty_swapping-global-mean.csv layers-empty_swapping-global-mean.csv contains a list of layers where information for your country was not available for the global assessment. For the Toolbox to be able to run, these layers were filled with averages from all other countries included in the global assessment. This file is not used anywhere by the Toolbox but is a registry of layers that should prioritized to be replaced with your own local layers if you require these layers for the models you develop.

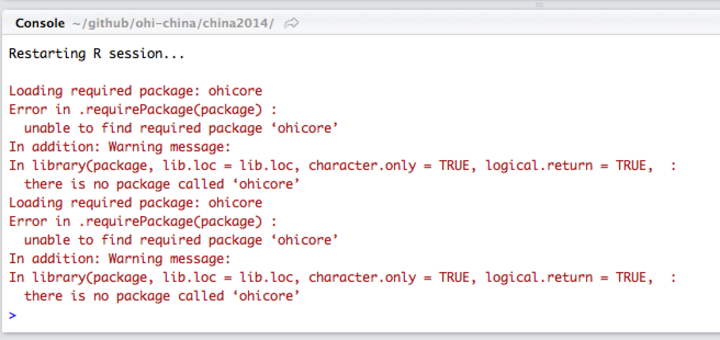

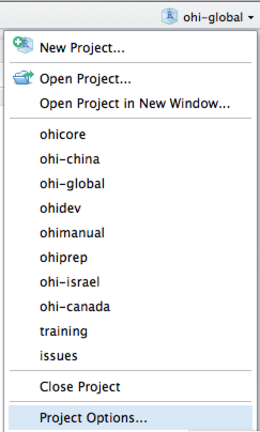

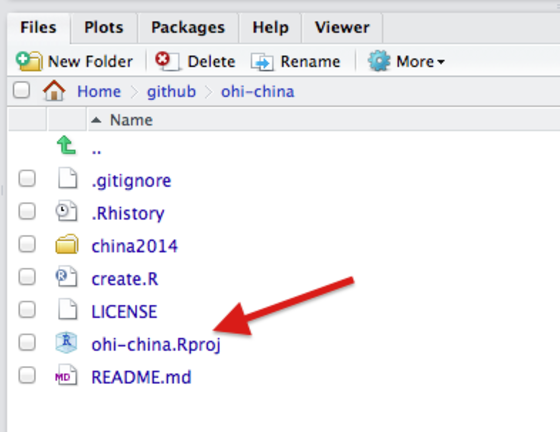

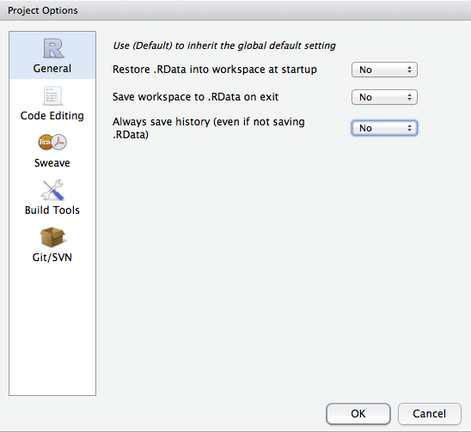

4.1.3 R scripts